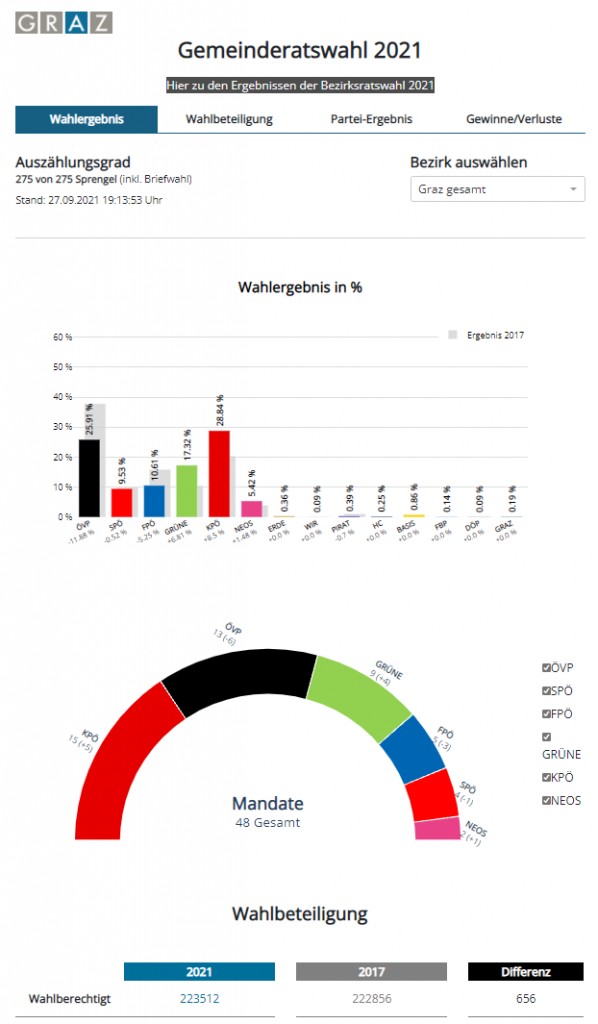

At short notice, the electoral authority wants a public real-time dashboard showing the current results of an early municipal council election.

There are 3 days to deliver a solution!

Is that possible?

That sounds exciting, and challenging for such a short time.

Well, the deadline cannot be postponed, because the election takes place regardless: on Sunday next week...


Starting position

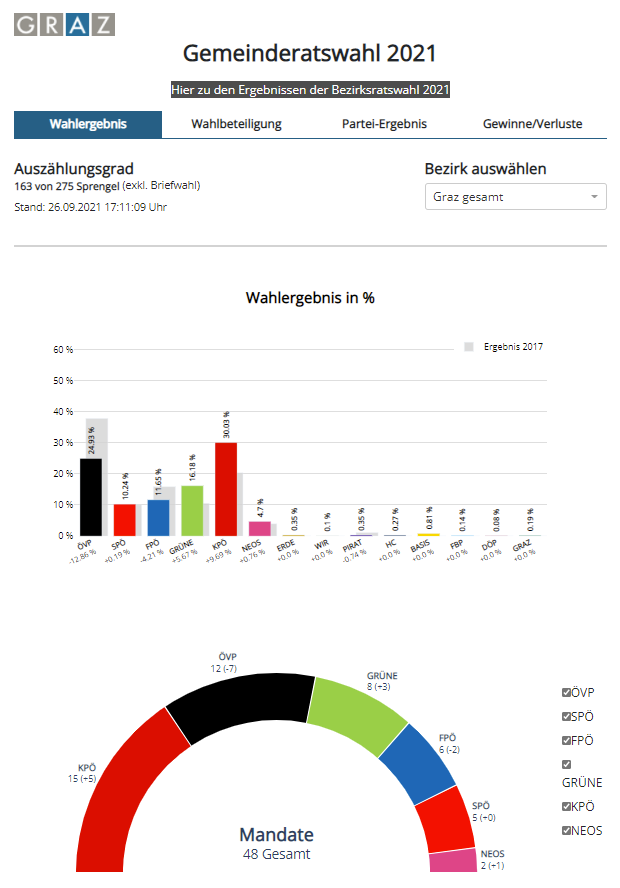

Every 5 minutes, a JSON file containing all preliminary election results is delivered to an FTP server. From this data, up-to-date charts are to be displayed on a public website, with some filter options.

Solution

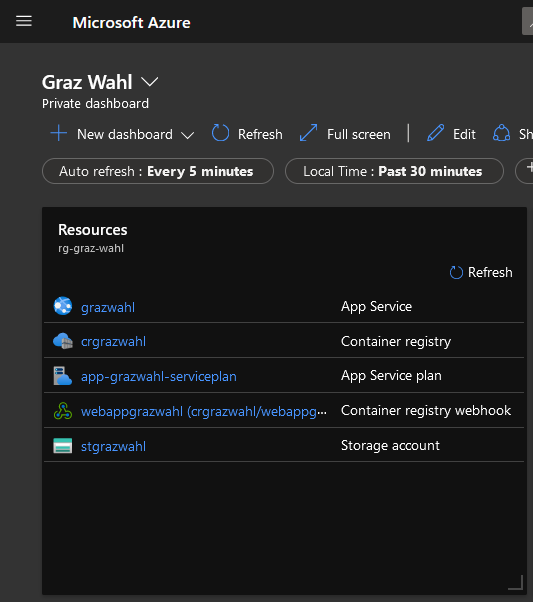

For a cloud-only application in Azure, all the necessary services are available.

We decide on a Python application that runs as a container in a web app.

How is the data processed?

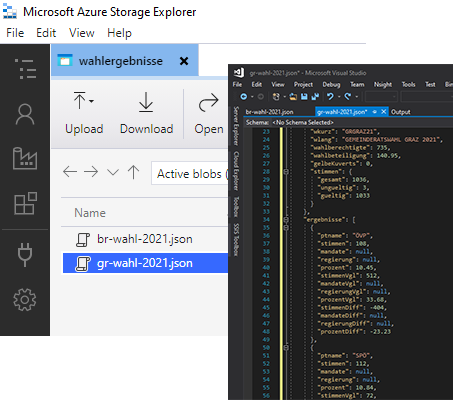

The JSON file is transferred to Azure Blob Storage.

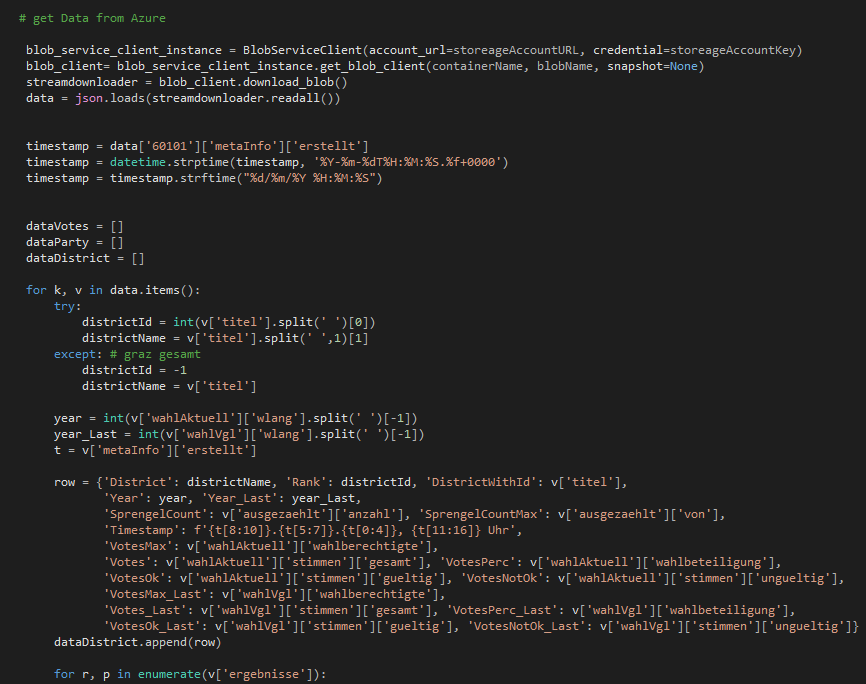

The Python script reads this data from Azure Storage and processes it.
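The article does not show the file format or the processing code. As a minimal sketch, assuming a hypothetical payload with a `districts` list in which each district holds vote counts per party, aggregating the results into vote shares could look like this. In the real app the JSON would come from the blob, for example via `BlobClient.download_blob().readall()` from the `azure-storage-blob` package; here it is inlined so the example runs on its own. `aggregate_results` and the payload shape are made up for illustration.

```python
import json
from collections import defaultdict

# Hypothetical payload: the real file format is not described in the article.
SAMPLE = """
{
  "districts": [
    {"name": "North", "votes": {"Party A": 1200, "Party B": 800}},
    {"name": "South", "votes": {"Party A": 600, "Party B": 400}}
  ]
}
"""


def aggregate_results(raw) -> dict:
    """Sum the votes per party over all districts and return vote shares in percent."""
    data = json.loads(raw)  # accepts str or bytes, e.g. the downloaded blob content
    totals = defaultdict(int)
    for district in data["districts"]:
        for party, votes in district["votes"].items():
            totals[party] += votes
    grand_total = sum(totals.values())
    if grand_total == 0:
        # Nothing counted yet: report 0 % for every party instead of dividing by zero.
        return {party: 0.0 for party in totals}
    return {party: round(100 * v / grand_total, 2) for party, v in totals.items()}


print(aggregate_results(SAMPLE))  # {'Party A': 60.0, 'Party B': 40.0}
```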

How are the results presented?

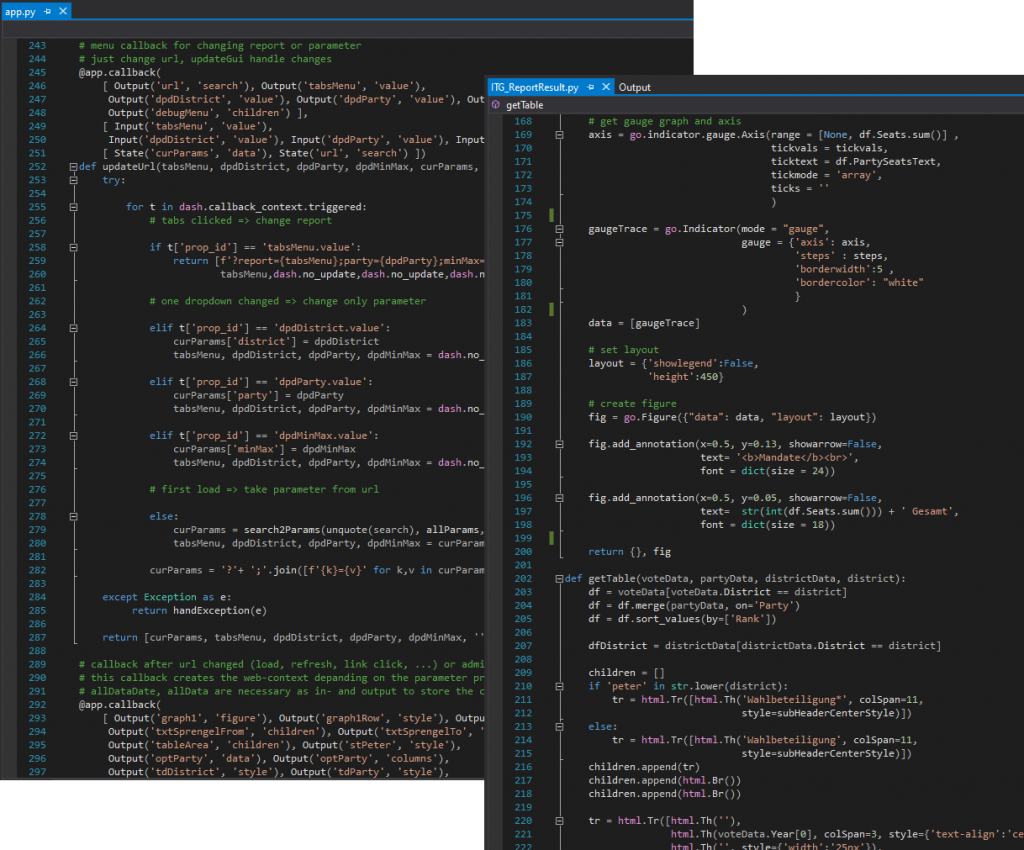

The Python application enriches the raw data with appropriate style tags and formatting, uses Plotly to generate the individual charts, and serves them with Dash.
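How the styling is attached is not shown in the article. One possible approach, sketched here under that assumption, is to build each chart as a plain dict in Plotly's figure format, with a color per party and a formatted label per bar. The party names, colors, and the `bar_figure` helper are hypothetical.

```python
# Hypothetical party colors; the article does not list the real styling.
PARTY_COLORS = {"Party A": "#d62728", "Party B": "#1f77b4"}
DEFAULT_COLOR = "#7f7f7f"


def bar_figure(shares: dict, title: str) -> dict:
    """Return a Plotly bar chart as a plain figure dict, largest share first."""
    parties = sorted(shares, key=shares.get, reverse=True)
    return {
        "data": [
            {
                "type": "bar",
                "x": parties,
                "y": [shares[p] for p in parties],
                # One color per bar; unknown parties fall back to gray.
                "marker": {"color": [PARTY_COLORS.get(p, DEFAULT_COLOR) for p in parties]},
                "text": [f"{shares[p]:.1f} %" for p in parties],
                "textposition": "outside",
            }
        ],
        "layout": {
            "title": {"text": title},
            "yaxis": {"title": {"text": "Share of votes (%)"}},
        },
    }


fig = bar_figure({"Party B": 40.0, "Party A": 60.0, "Others": 0.0}, "Preliminary result")
print(fig["data"][0]["x"])  # ['Party A', 'Party B', 'Others']
```

In Dash, such a dict can be passed directly as `dcc.Graph(figure=fig)`, and `plotly.graph_objects.Figure(fig)` accepts it as well, so the styling logic stays testable without importing either library.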

The menu and filter controls are also implemented in Python, as responsive Dash components.
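The wiring of the filter controls is not shown in the article. A minimal sketch, assuming a hypothetical payload in which each district lists votes per party: one helper builds the options for a district dropdown, the other restricts the vote totals to the selected districts. The component IDs and `build_figure` in the comment are hypothetical.

```python
from typing import Optional, Union

# Hypothetical sample data in the same shape as the results file.
DATA = {
    "districts": [
        {"name": "North", "votes": {"Party A": 1200, "Party B": 800}},
        {"name": "South", "votes": {"Party A": 600, "Party B": 400}},
    ]
}


def dropdown_options(data: dict) -> list:
    """Options in the {"label": ..., "value": ...} form that dcc.Dropdown accepts."""
    return [{"label": d["name"], "value": d["name"]} for d in data["districts"]]


def votes_for(data: dict, selected: Optional[Union[str, list]]) -> dict:
    """Sum the votes per party, restricted to the selected districts (None or [] = all)."""
    if isinstance(selected, str):
        # A single-select dropdown delivers a string, a multi-select one a list.
        selected = [selected]
    totals: dict = {}
    for district in data["districts"]:
        if selected and district["name"] not in selected:
            continue
        for party, votes in district["votes"].items():
            totals[party] = totals.get(party, 0) + votes
    return totals


# In the Dash app, a callback would connect the filter to the chart, e.g.:
# @app.callback(Output("results-graph", "figure"), Input("district-filter", "value"))
# def update(selected):
#     return build_figure(votes_for(DATA, selected))  # build_figure is hypothetical
print(votes_for(DATA, ["South"]))  # {'Party A': 600, 'Party B': 400}
```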

How does the application get onto the web?

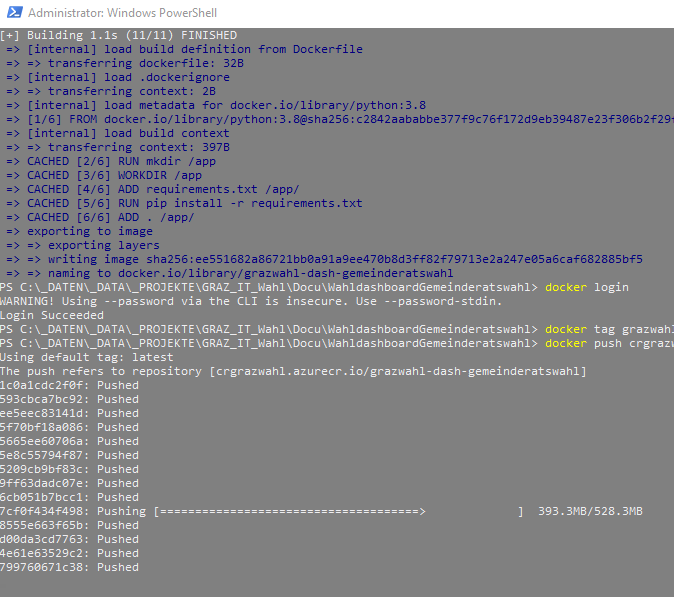

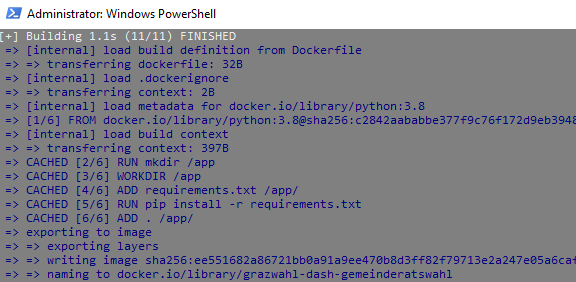

The Python application is packaged into a Docker container image and pushed to a container registry in Azure.

How does the website run with a container?

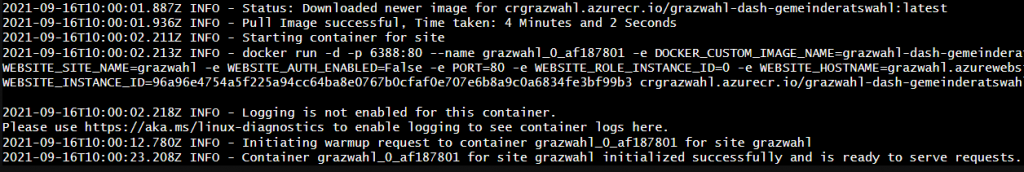

An Azure WebApp runs the container image and serves the web requests. Its output is the fully rendered HTML with all charts, always based on the current data.
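The article does not say how the app keeps its data current. One common pattern, sketched here as an assumption, is to reload the results at most once per time interval and serve a cached copy in between, so that 1,000 page views do not trigger 1,000 blob downloads. In Dash, `app.layout` can also be set to a function, which is called on every page load and could read from such a cache. `TimedCache` and the 60-second interval are hypothetical choices; with a new file every 5 minutes, they keep the delay after an upload to about a minute.

```python
import threading
import time
from typing import Any, Callable, Optional


class TimedCache:
    """Calls `loader` at most once per `ttl` seconds and serves the cached value in between."""

    def __init__(self, loader: Callable[[], Any], ttl: float = 60.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._loader = loader
        self._ttl = ttl
        self._clock = clock  # injectable so the behavior can be tested without sleeping
        self._lock = threading.Lock()  # several request threads may ask at once
        self._value: Any = None
        self._loaded_at: Optional[float] = None

    def get(self) -> Any:
        with self._lock:
            now = self._clock()
            if self._loaded_at is None or now - self._loaded_at >= self._ttl:
                # If the loader raises, the timestamp is not updated, so the next call retries.
                self._value = self._loader()
                self._loaded_at = now
            return self._value


# Usage (load_results_from_blob is hypothetical):
# results = TimedCache(load_results_from_blob, ttl=60)
# ... in each page request: data = results.get()
```

When the App Service plan is scaled out, each instance keeps its own cache, which is harmless here because all instances read the same blob.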

What if something changes in the application?

After the change, a new Docker image is built locally and pushed again; a webhook ensures that the WebApp pulls the updated image from the registry.

What happens with 1,000 simultaneous page views?

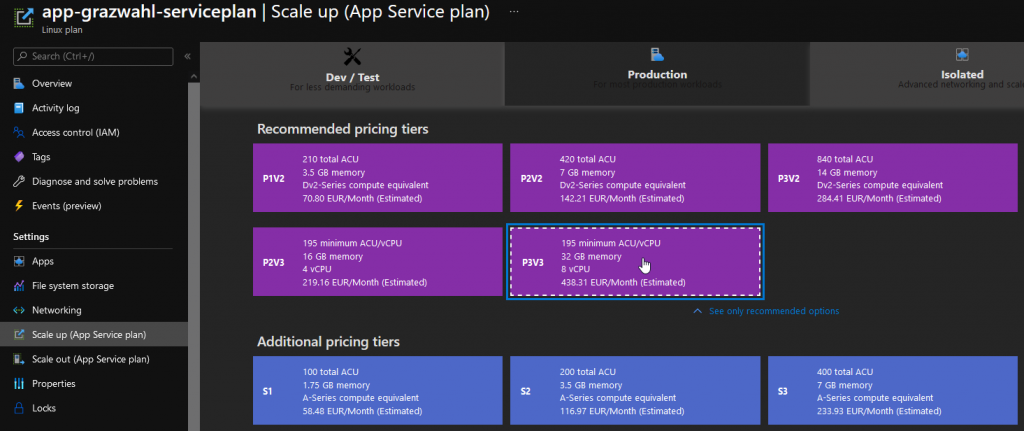

The App Service plan of the WebApp can be scaled depending on the traffic.

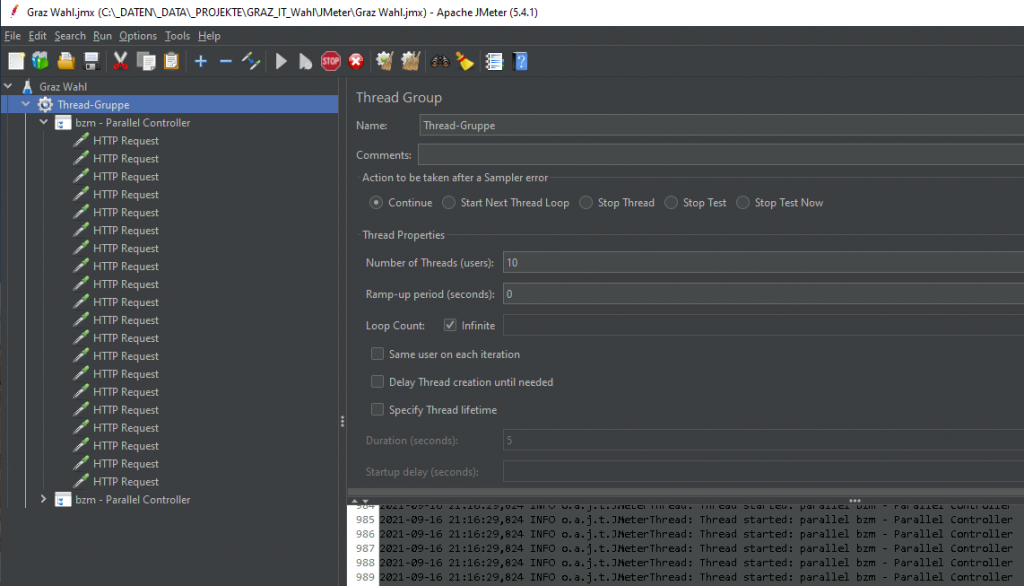

It is certainly not a bad idea to test the app beforehand, so a load test with 1,000 simultaneous web requests is created in JMeter.

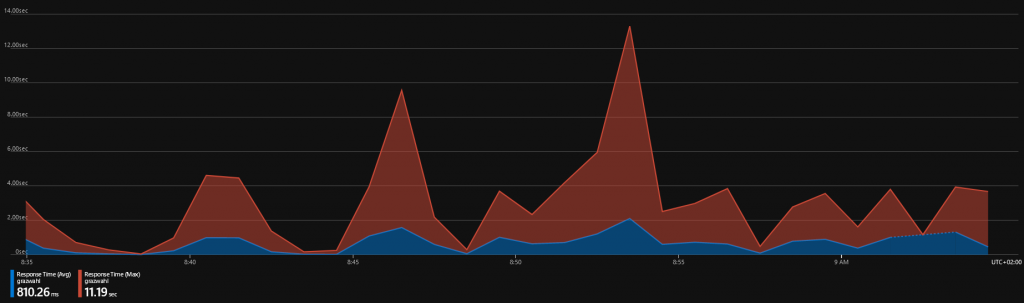

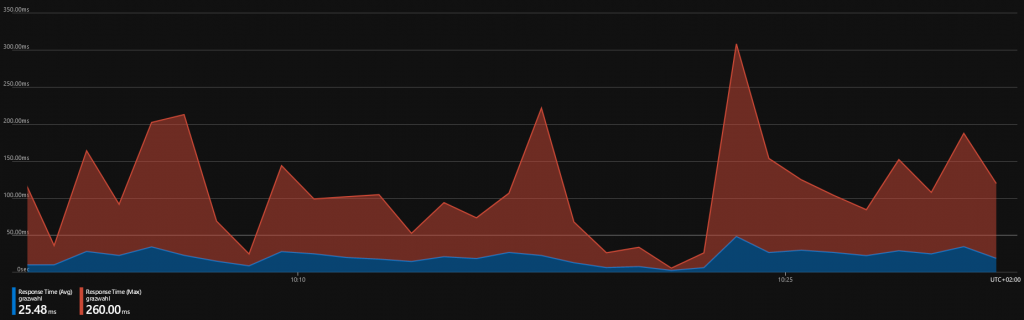

With the simplest and cheapest tier, response times are very good for 10 concurrent requests. With 1,000, the picture is completely different: all requests are still processed without any problems and CPU and memory are not at their limits either, but the response time already rises to 1 to 3 seconds.

Scaling up to a higher tier brings response times down to a very good 25 ms.
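The author used JMeter for the load test. For a quick check without JMeter, a rough stdlib-only stand-in that fires concurrent GET requests and summarizes the latencies might look like this. It is a swapped-in technique, not the author's test plan; `load_test`, `summarize`, and their parameters are hypothetical. A single Python client can itself become the bottleneck well before 1,000 parallel requests, so a dedicated tool like JMeter remains the better choice for the real test.

```python
import time
import urllib.request
from concurrent.futures import ThreadPoolExecutor
from statistics import median, quantiles


def timed_get(url: str, timeout: float = 10.0) -> tuple:
    """Fetch `url` once; return (success, elapsed seconds)."""
    start = time.perf_counter()
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            resp.read()  # include the body transfer in the measured time
            ok = 200 <= resp.status < 400
    except Exception:  # HTTP 4xx/5xx, timeouts and connection errors count as failures
        ok = False
    return ok, time.perf_counter() - start


def summarize(results: list) -> dict:
    """Summarize (success, seconds) pairs; needs at least 2 results for the percentile."""
    latencies = sorted(elapsed for _, elapsed in results)
    return {
        "requests": len(results),
        "errors": sum(1 for ok, _ in results if not ok),
        "median_s": median(latencies),
        "p95_s": quantiles(latencies, n=20)[-1],  # 19 cut points; the last is the 95th percentile
        "max_s": latencies[-1],
    }


def load_test(url: str, requests: int = 1000, concurrency: int = 100) -> dict:
    """Send `requests` GETs with up to `concurrency` in flight and summarize the latencies."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        results = list(pool.map(lambda _: timed_get(url), range(requests)))
    return summarize(results)


# Example (hypothetical URL):
# print(load_test("https://<your-webapp>.azurewebsites.net/", requests=1000, concurrency=200))
```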

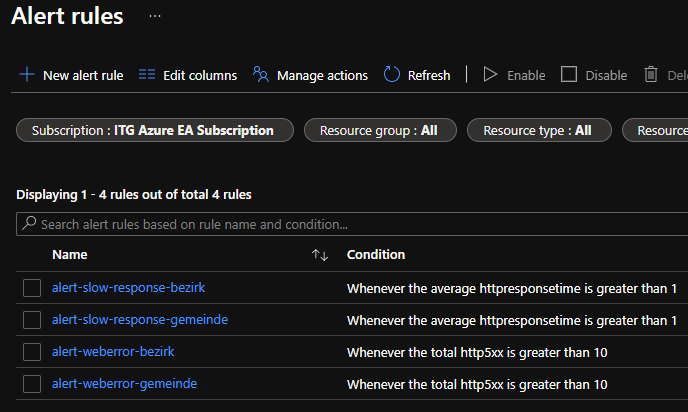

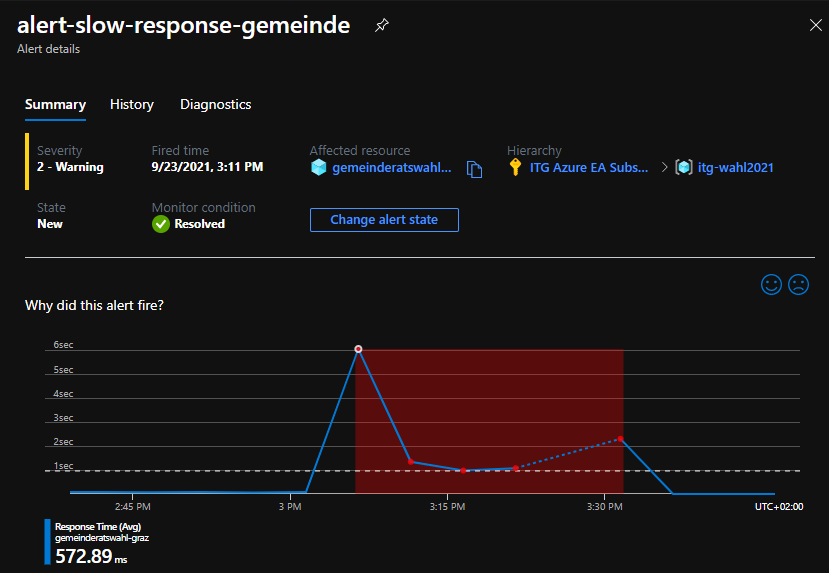

In addition, alerts are set up so that we can react quickly: if the response time exceeds 1 second, or if more than 10 web errors occur within 5 minutes, an SMS is sent.
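The alert rules themselves are configured in Azure Monitor, and the SMS is sent through the alert's action group, not by application code. To make the two conditions concrete, here is a small sketch of the evaluation logic, assuming the response time is checked as an average over the same 5-minute window (the article does not say which aggregation was used). `Sample` and `check_alerts` are hypothetical names.

```python
from dataclasses import dataclass
from statistics import fmean


@dataclass
class Sample:
    timestamp: float   # seconds since some epoch
    response_s: float  # response time of one request in seconds
    is_error: bool     # e.g. an HTTP 5xx response (assumption)


def check_alerts(samples, now: float, window_s: float = 300.0,
                 max_avg_response_s: float = 1.0, max_errors: int = 10) -> list:
    """Return the reasons to alert for the window (now - window_s, now]."""
    recent = [s for s in samples if now - window_s < s.timestamp <= now]
    reasons = []
    if recent:
        avg = fmean(s.response_s for s in recent)
        if avg > max_avg_response_s:
            reasons.append(f"average response time {avg:.2f} s exceeds {max_avg_response_s} s")
    errors = sum(s.is_error for s in recent)
    if errors > max_errors:
        reasons.append(f"{errors} errors in the last {window_s:.0f} s")
    return reasons


# A non-empty result is the point at which the SMS would be triggered.
print(check_alerts([Sample(10.0, 1.5, False)], now=20.0))
```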

How did it actually go on election day?

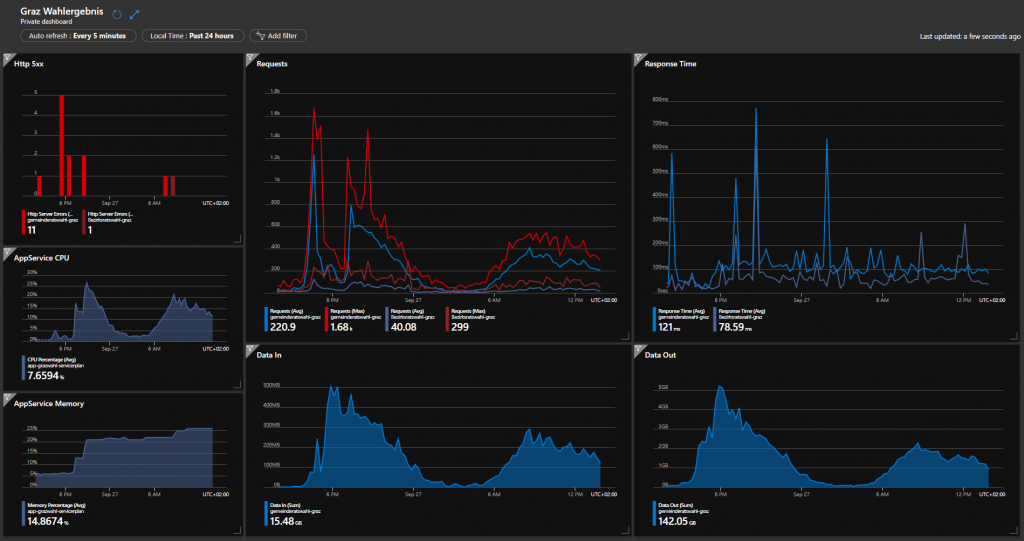

The first counts are publicly available from 4:00 p.m., so the app was scaled up ahead of this expected peak.

The tension rises...

After the 4:00 p.m. peak, another followed at 7:00 p.m. with the TV news, and another the next morning.

The 1,000 simultaneous requests we had tested for were not only reached but exceeded, peaking at around 1,400.

Response times stayed in the ideal range of 30 to 100 ms, and there were practically no server errors.

Then the data processing needed an adjustment, along with some small layout corrections, all during live operation.

This also went smoothly, with practically no downtime: the app was changed locally, pushed as a new Docker image, and automatically pulled into the WebApp via the webhook.

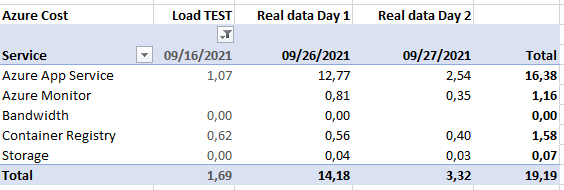

And the cost?

The cost of running the app in the Azure cloud is more than manageable.

Conclusion

A simple web application can be developed very quickly and set up relatively easily as a cloud-only solution in Azure.

A big advantage is that scaling follows the traffic. This also suits temporary projects like this one, which are switched off after a few weeks and then no longer incur any costs.

In addition to the political sensation, this mini-project was also a success.

Share your experience with a comment below!
