Inhalt

Continuation of part 1

Mass user test for Power BI - why and how you can find out in Part 1…

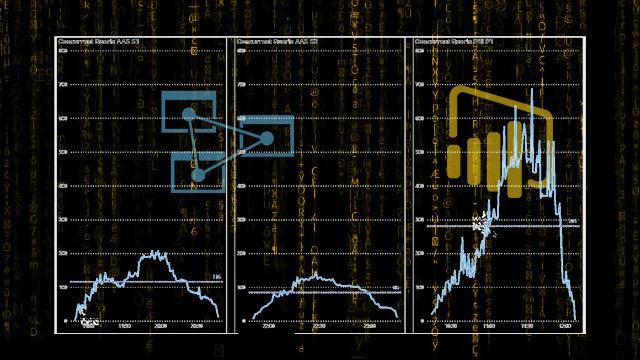

Comparison of AAS vs. PBI

During the test, I also wanted to establish the basics as to whether an AAS (Azure Analysis Services) has an advantage over Power BI Premium, because I'm biased here, it is the same technology, but Power BI is more or less a "black box" and instinctively I like to have all backend services “under one roof” in Azure under control and well monitored and only the reporting in Power BI.

Therefore, all tests run on an identical model and also the same scaling against AAS S1 and Premium P1.

Results

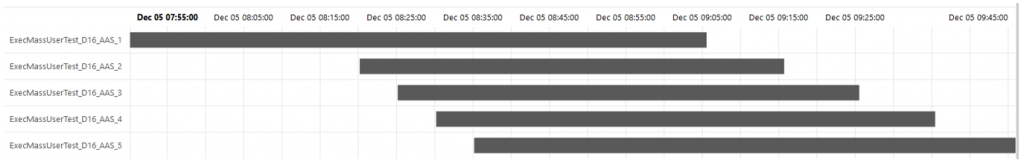

Great, the mass test is running - with ADF and SSIS, a strong IR and even in the full configuration up to 750 simultaneous processes (DAX queries), against Azure Analysis Services and against Power BI Premium Dataset.

Now I just need to analyze the results ...

But how?

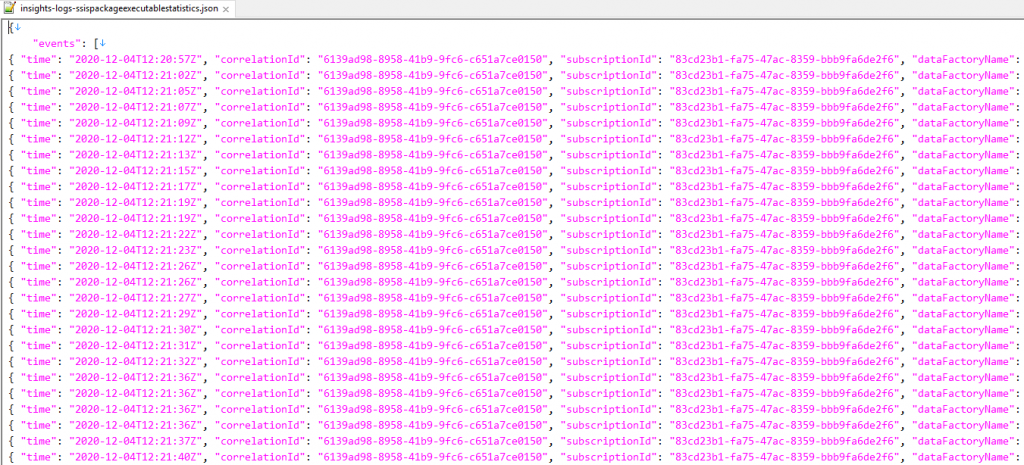

SSSI logs

SSSI logs

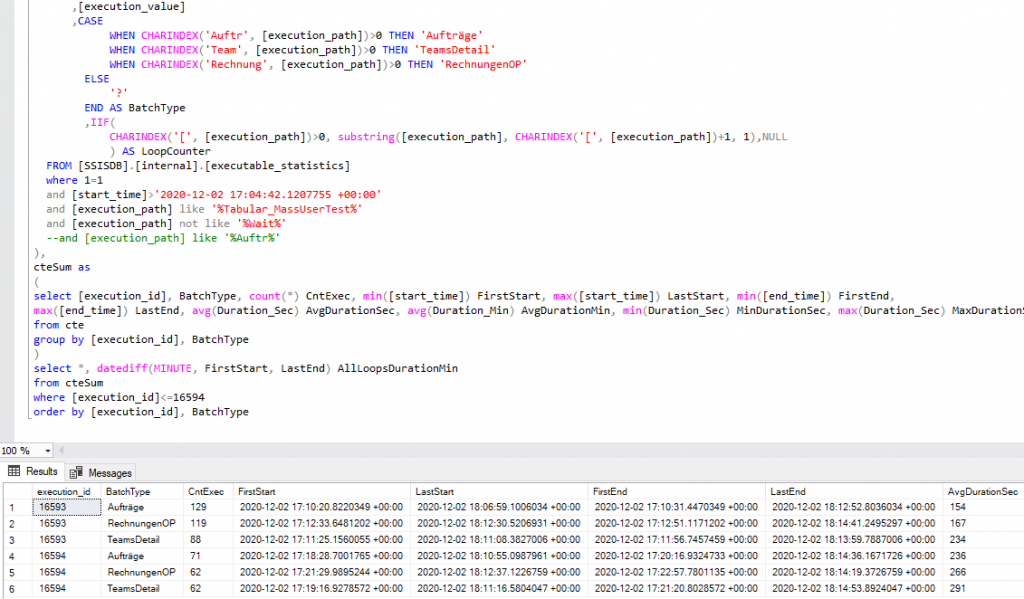

A lot is logged by SSIS, which ends up in the SSISDB or in the file system, there you can evaluate it a bit cumbersome but with SQL.

But, from a certain point in time on, nothing arrived here, the logging system was overloaded or deadlocks - it is probably not thought that 750 processes are running.

No results without full logs!

ADF Diagnostic

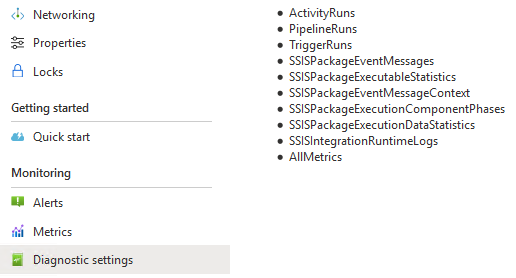

So I tried it differently, with the diagnostics of the Azure Data Factory.

These logs are essentially the same SSIS logs plus those of the ADF, and it is obviously not a problem to stream all processes in parallel to a datalake.

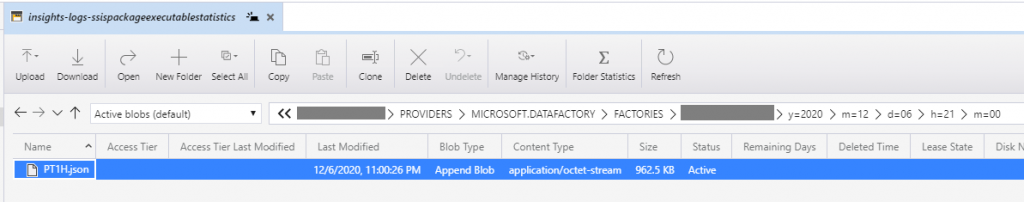

The logs are partitioned into “hundreds” of sub-folders, as is usual on the Datalake with many files and parallel processes.

But that's not a problem either, because with Power BI you can easily read in an entire folder recursively and Power BI can also resolve JSON - and then the evaluation can begin ...

Unfortunately not. Power BI denied!

ADF Diagnostic logs

If you want to import all of the files you will not have luck because the log is an invalid JSON! (I've been discussing this with Microsoft for months).

It is a line JSON which as a whole has no valid structure, so I copied the files together manually because I wanted to finally (!!!) see the results.

By the way, if you work with large files and also want to do “Find & Replace” inside, you will quickly reach your limit with Notepad ++ or something similar - I recommend: EmEditor – impressiv!

After the import and some tinkering in PowerQuery, the data can be analyzed.

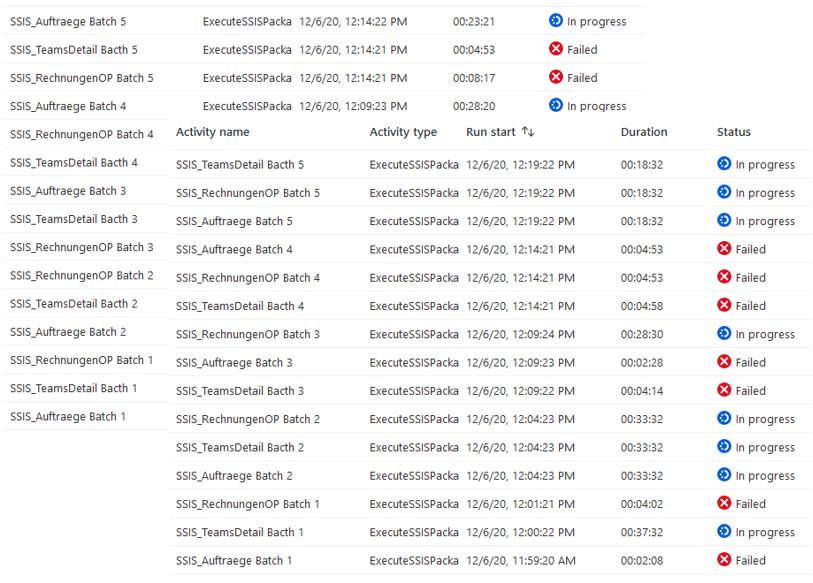

Azure Datafactory Log

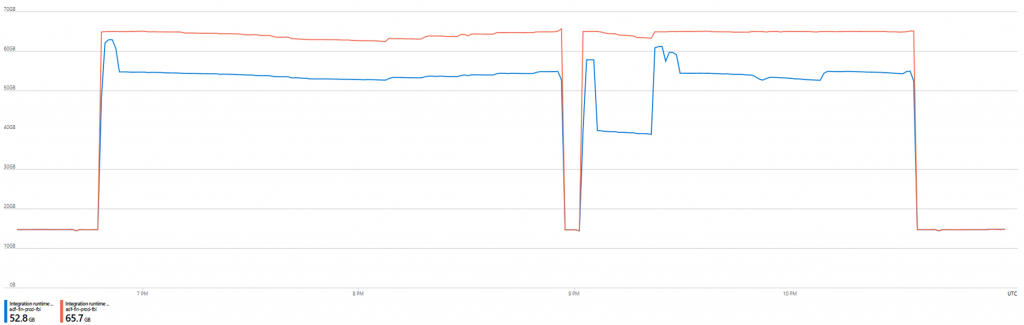

The ADF log also provides some information about which process ran and which went wrong, how stressed the IR is, etc.

RESULT

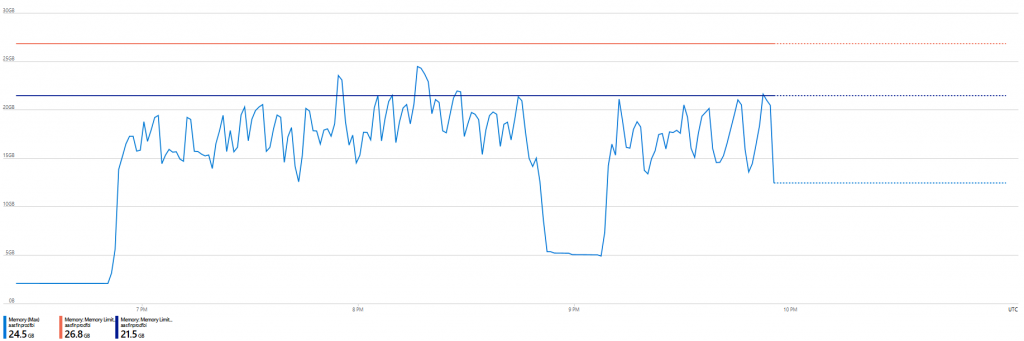

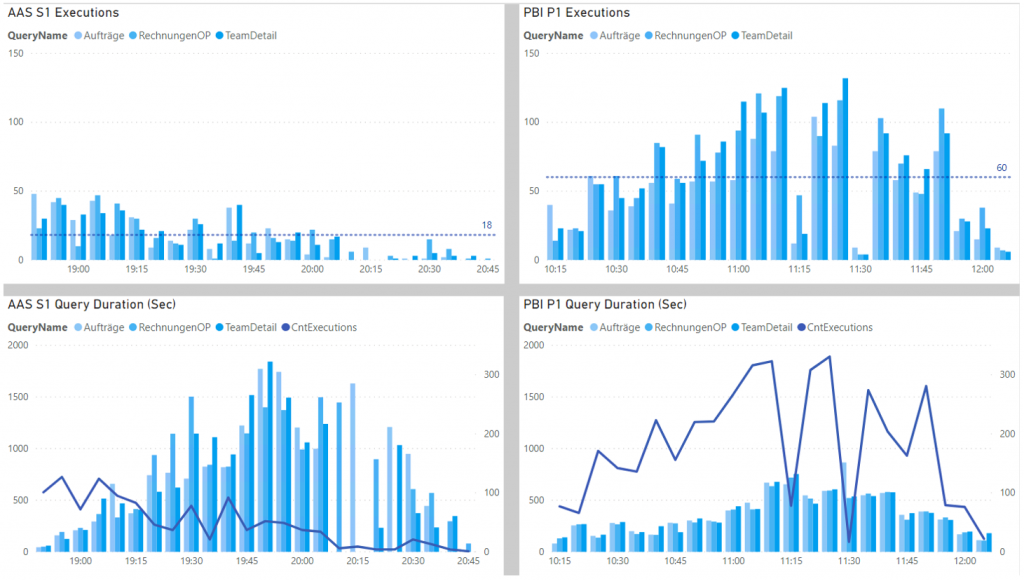

Azure Analysis Services (AAS S1) is very stable, practically no failures, but has a significantly lower throughput.

The memory utilization is consistently high, with an increasing number of processes everything remains in operation.

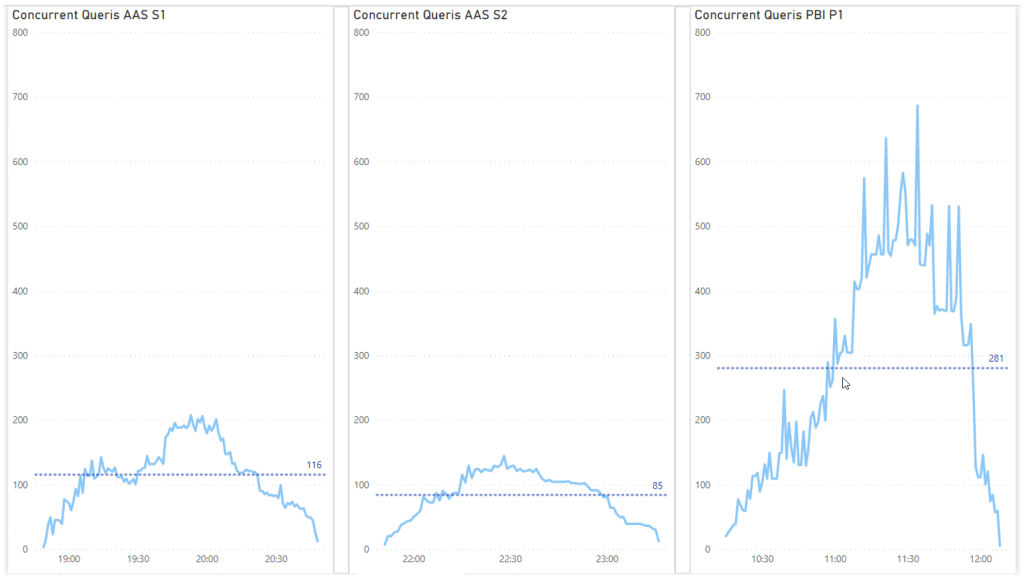

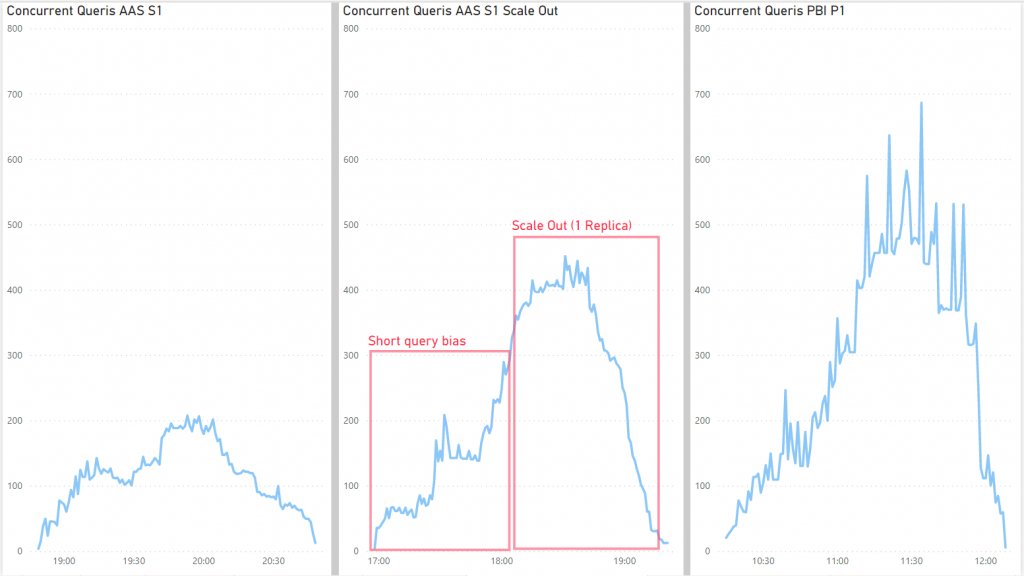

AAS manages 200 simultaneous queries, an average of 120 (per 5 minutes).

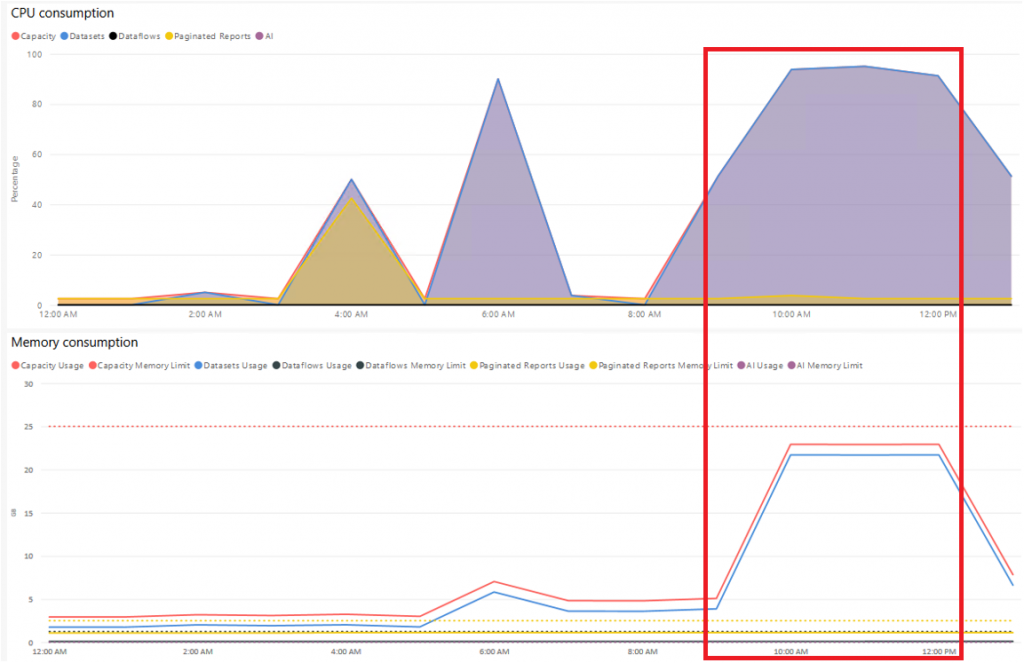

Power BI Premium (P1) has some failures of individual queries, takes a long time to "calm down" from the maximum memory usage, but has a very high throughput.

The memory usage and CPU go up quickly and stay at the limit, new queries are rejected.

PBI comes up to 500 queries, sometimes more, an average of 280 (per 5 minutes).

AAS S2

Somehow I didn't want it to be true that the AAS had done so badly and so I repeated the test with S2, the result was even worse.

These are of course only snapshots and many factors play a role, such as regions of the services, Azure fluctuations, allocated resources, etc.

RESULT graphics

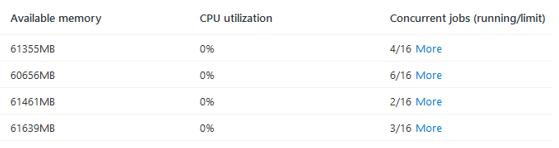

AAS Scale Out

I still didn't want it to be true and I tuned the AAS, because the test was already finished and only had to be started with one click.

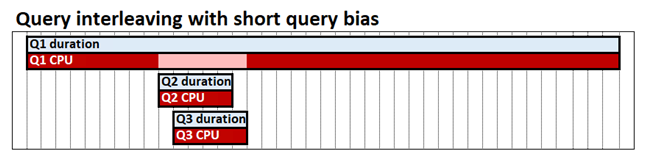

First I changed the query priorities so that shorter queries get resources even in the middle of long queries.

It will use the full memory very quickly, but at some point that hardly helps and there is hardly any improvement in the S2 either, so it must be because of the compute power to process more at the same time, so I have done a Scale Out .

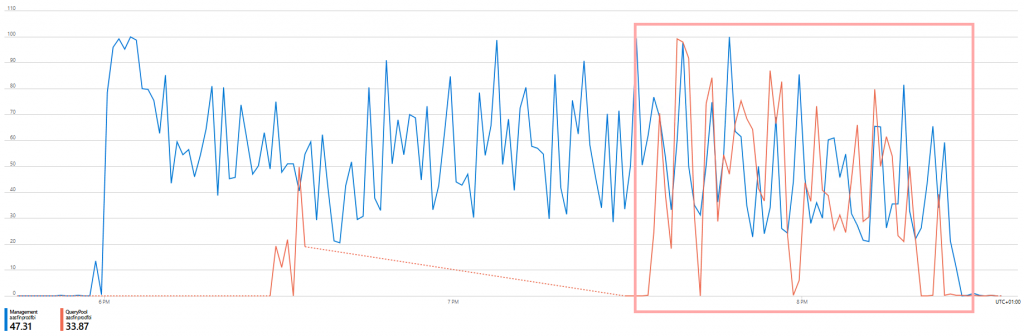

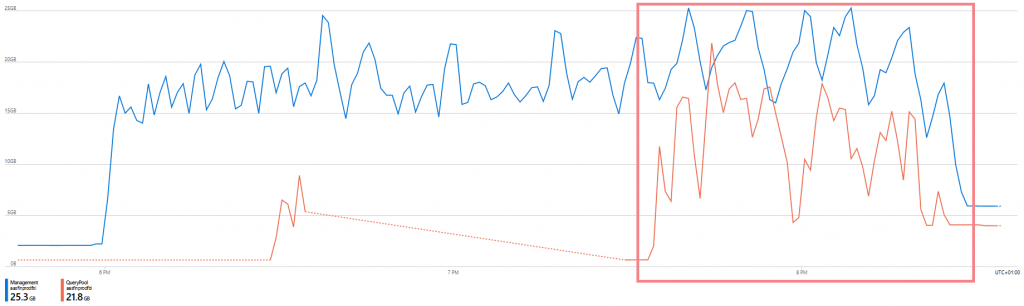

You can see immediately that the maximum possible 100 QPU are doubled with the scale out and the memory is also used by the replica.

Lo and behold, the throughput immediately comes close to that of Power BI Premium.

30 minutes under full load, the simultaneous queries are almost the same.

The performance is still better with PBI.

Conclusion

Power BI Premium can still operate the relatively small but complex model even with many accesses, some queries no longer come to the end user when the system is up, but most of them have a good throughput and response time.

The system should be able to deal with 500 users, with the planned 1,000 or more it will get exciting...

The AAS is not an alternative to this context, at least in the comparable variant.

An enormous increase of results you get from a scale out.

Power BI is much better, the main advantage of AAS is its easy scalability, at any time, as required - if the month-end closing is tight, you add 1-2 replicas for a few hours and switch them off completely at the weekend to save money.

Maybe I'll do a third part when the 1,000 users actually start - hopefully not with the title “Dream and Reality”...

Leave a comment